Thursday, January 09, 2014

A color analysis and indexing engine for Amazon images of women's dresses

It's a prototype that allows you search tens of thousands of images based on a combination of colors. All these images are women's dresses, and are directly linked to Amazon.com.

Let's see if anyone would visit this site at all.

Wednesday, June 25, 2008

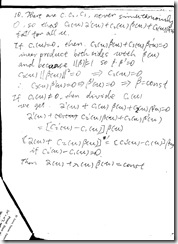

Solutions to DIFFERENTIAL GEOMETRY: A First Course in Curves and Surfaces

DIFFERENTIAL GEOMETRY: A First Course in Curves and Surfaces.

Theodore Shifrin.

It's a very concise and clear book. Very good for people who just want basic understanding of curves and surfaces. It saves a lot of blabla but covers every idea basic and important.

I only do those questions that's interesting to me, please don't copy it for your homework.

If you find better solutions, please let me know, thanks.

Solutions to Excercises 1.2, Question 13 to 18.

Monday, May 19, 2008

Worth Reading

PAMI

Volume 30, Issue 5

A Factorization-Based Approach for Articulated Nonrigid Shape, Motion, and Kinematic Chain Recovery from Video

1. Structure from Motion: reconstruct shape information from motion trajectories.

2. Previous methods assume that kinematic model is known for the articulated object.

3. Considering only affine transform, two points move independently whenever their motion trajectories matrices have full rank. If they lie on an axis (bone), then their motion matrix lose two ranks, if they are linked by a joint, then they lose one. This is the basic principle for the paper. The paper tries to find the interest subspaces of the motion matrices to identify joints and axis, so it can reconstruct the kinematic (skeleton) model.

4. Another application of factorization.

Nonrigid Structure-from Motion: Estimating Shape and Motion with Hierarchical Priors

1. Factorization-Based methods for Shape from Motion are very sensitive to noise.

2. The paper uses probabilistic methods to deal with the noise.

3. Instead of making a single prior distribution for the latent coordinates, the paper use two level of prior information, one is that the latent coordinates is Gaussian like (so the deformation of objects should be similar), the second prior information is about the motion.

4. On synthetic noiseless data, closed form solutions work well and the probabilistic solution might gets stuck on local minima, but for noisy data in the real situation, probabilistic method produces best result.

5. I think the main point of this paper is that it proposes a probabilistic framework and optimization algorithm for Shape from Motion problem.

Modeling, Clustering, and Segmenting Video with Mixtures of Dynamic Textures

1. Introduction. Dynamic Textures can be described as a stochastic process with a model of linear dynamical systems (LDS), something like the model used by linear Kalman Filtering and ARA. The paper extends this model by mixing them using a latent variable to describe the class this Dynamic Texture could be sampled from.

2. An EM algorithm is proposed to train the parameters.

3. Relation to Adaptive Filtering and Control, especially Kalman filtering and LDS is discussed.

4. The algorithm can be used in Video Clustering and Motion Segmentation.

5. For Time-Series Clustering, it is tested against three multivariate-series clustering algorithms like PCA subspace similarity, KL divergence, and Chernoff measure using synthetic data.

6. It is also tested on Real Video like fountain scene, highway traffic and pedestrian scenes.

7. This work studied a principled probabilistic extension of the dynamic texture model. Classic dynamic texture represents a single sample from a linear dynamic system space, mixture of them can represent a group.

8. It has a system identifiably problem witch I don’t understand by now.

Video Behavior Profiling for Anomaly Detection

1. Introduction. The paper addresses the problem of identifying anomalies in a CCTV video and it’s not necessary to classify them. And the paper shows that using unlabeled data is superior to using manually labeled data for the job. Manually labeling a surveillance video into different classes could be tedious and subjective.

2. The framework used in the paper includes: 1) A scene event-based behavior representation, which is different from the approaches based on object tracking. This avoids the difficulty of tracking targets under occlusion in noisy scences. And Each behavior pattern is modeled using a Dynamic Bayesian Network, which provides a suitable means for time warping and measuring the affinity between behavior patterns. 2) Behavior profiling based on discoering the natural grouping of behavior patterns using the relvant eigenvectors of a normalized behavior affinity matrix (Spectral clustering). 3) A composite generative behavior model using a mixture of DBNs. 4) Online anomaly detection using a runtime accumulative anomaly measure and normal behavior recognition using an online Likelihood Ratio Test (LRT) method.

3. Video is first separated intro small segments using static frames between them and a Event-Based Behavior Representation is extracted as in reference 8 (S. Gong and T. Xiang, “Recognition of Group Activities Using Dynamic Probabilistic Networks”

4. Discussions and Conclusions: 1) The experiments show that a behavior model trained using an unlabeled data set is superior to a model trained using the same and labeled dataset in detecting anomaly from an unseen video. The former model also outperforms the latter in distinguishing abnormal behavior patterns from normal ones contaminated by errors in behavior representation. A model trained using manually labeled data may have an advantage in explaining data that are well defined, but it does not necessarily help a model with identifying novel instances of abnormal behavior patterns as the model tends to be brittle and less robust in dealing with instances of behavior that are not clear cut in an open-world scenario. 2) The eigenvector selection-base spectral clustering algorithm is able to discovering the natural grouping of behavior patterns. 3) The online LRT-based normal behavior recognition method is superior to the conventional ML-base method.

5. Problems. Lack of semantic information. There is still a long way to go toward a general-purpose anomaly detection method that can be applied to any type of scenarios.

6. Questions. How to use domain knowledge? How to speed up the learning process with supervisions from human? How knowledge about one domain can be generalized to others?

Riemannian Manifold Learning

1. The paper focuses on Manifold Learning. Existing algorithms are ISOMAP, LLE, Laplacian eigenmaps, Hessian eigenmaps, semidefinite embedding, manifold charting, local tangent space alignment, diffusion maps, and conformal eigenmaps.

2. The problems that manifold learning algorithms have are:

a) Metric preserving problem. ISOMAP can do it by unfolding the manifold onto a flat plane and try to preserve the metric, but this would not be possible for surfaces like sphere, whose Gaussian curvature is isometry invariant and not zero.

b) Cost averaging problem. When unfolding the manifold, many existing algorithms use a uniform cost for every point on the manifold, this could lead to undesired shape of the unfolded. The paper proposes a local optimization procedure instead of a global one to avoid the problem.

c) Incremental-learning. Indeed, previous manifold learning algorithms all use batch learning, i.e. the training samples are consumed at once, adding a new sample would require redoing the whole learning process.

d) Global optimization. Global optimization is expensive.

3. Assumptions. Currently, the paper made quite restricted assumptions and they only demonstrate on 3D face data so far. For simplicity, only varying poses and lighting conditions are considered in this model, as they are the most important factors in face recognition. We require that the distance from the camera to the face and the focal length of the camera are fixed so the acquired images have similar scales. The camera is allow to rotate around its optical axis.

Monday, May 12, 2008

Worth Reading

PAMI

Volume 30, Issue 1

Groups of Adjacent Contour Segments for Object Detection

1. Introduction. Many existing recognition algorithms use local patches (color cue) instead of contour features. This paper proposes a scale-invariant local shape features formed by chains of k connected roughly straight contour segments.

2. The shape represented by k-AS increases with k, as 2-AS can be L shapes, 3-AS can form C, F, and Z shapes.

3. Following a bag-of-features paradigm, they construct a codebook of k-AS types

4. Related Work. Problem to existing solutions: clutter and restricted class of shapes. A natural thought is that people can use boosting to select edge patterns for classification (not scale invariant and tailored to specific class, dataset).

5. Split the detector window into separated tilts and compute histograms in each tilt so as to form a descriptor.

6. Use of Integral Histograms for speeding up the sliding-window detector based on kAS histogram.

7. Conclusion. Future work, Multiple View. Rotation invariant. More complex framework.

Locally Rotation, Contrast and Scale Invariant Descriptors for Texture Analysis

Yet another feature descriptor.

Mixture of Spherical Distributions for Single-View Relighting

1. Assumptions. Given a 3D model of the object, the method can recover the direction and intensity of ultiple light sources and the number of light sources and specular reflectance property of the object. Previous methods assume 1) all light sources are infinitely distance (directional) 2) the geometry of the target object is known, 3) number of light sources known.

2. To estimate the parameters for the light sources, the paper use EM algorithm.

LEGClust -A clustering Algorithm Based on Layered Entropic Subgraphs

1. This clustering algorithm uses local structure information of the data, so it can be used to cluster Swiss Roll like data.

Mutual Information for Lucas-Kanade Tracking (MILK): An Inverse Compositional Formulation.

Classical LKT algorithm uses SSD as the distance measure for two images, this paper proposes Mutual Information as a different measure. And it also propose an efficient algorithm based on Back Composition. Source code available.

Sunday, May 11, 2008

Notice the Invariance Property of Maximum Likelihood Estimation!

Friday, May 09, 2008

All comps passed, GPA 4.0, not bad.

Another Brain Workout. Matrix Rank Problem

**********************************************Wei

Suppose X is a random data matrix with dimension n by t

M=inv(X ' * X), i.e. M is the inverse of product of (transpose X and

X)

and P=I-X * M * X'

I is an identity matrix (n by n), is rank (P) = n-t?

I wrote a testing MATLAB program to verify it.

%%%%%%%%%%%rank_test.m

for n=7:15

for t=2:7

X=randn(n,t);

M=inv(X'*X);

I=eye(n,n);

P=I-X*M*X';

r=rank(P);

fprintf('the rank of p at n=%d, t=%d is %d\n',n,t,r);

end

end

the rank of p at n=7, a=2 is 5

the rank of p at n=7, a=3 is 4

the rank of p at n=7, a=4 is 3

the rank of p at n=7, a=5 is 2

.........................

%%%%%%%%%%%%%%%%%

So far, I vaguely feel this identity is right by calculating the trace

tr(P)=tr(I)-tr(X * M * X')=tr(I)-tr(X'*X*M)=n-t

Thanks

*******************************************Robert Israel

> And, I verified that X does not have to have full rank by setting some

> rows of X to trivial one. It doesn't affect much except for occasional

> MATLAB complaining for singularities.

> > Hum.... There ARE some exceptions. I think it's due to the numerical

> > subtlety.

> > On May 8, 10:58 am,

> > > Suppose X is a random data matrix with dimension n by t

> > > M=inv(X ' * X), i.e. M is the inverse of product of (transpose X and

> > > X)

> > > I is an identity matrix (n by n), is rank (P) = n-t?

Thus Ker(P) = Ran(X). P is the orthogonal projection on the

orthogonal complement of Ran(X), so its rank is n - rank(X).

**********************************************Wei

OK. Dumb as I am, I think I've figured out your solution:

Because PX=0, Thus Ker(P) \inc Ran(X)

And, because for any x, Px=0, x is included in Ran(X)

so Ker(P) is included in Ran(X)

Thus Ker(P)=Ran(X).

Because of theorem (Dimension of kernel of A ) + (dimension of range

of A )=(dimension of U).

We get the answer.

Tuesday, April 29, 2008

Worth Reading

comment: this paper provides a very good review of part-based objection recognition algorithms and it reveals some problems of existing algorithms: the dataset they use tend to be oversimplified. And it points some more difficult real world dataset for further research.

.jpg)

.jpg)

.jpg)

.jpg)

.jpg)